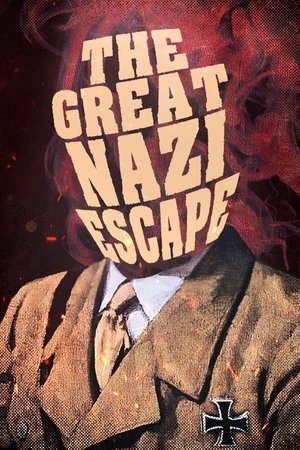

The Great Nazi Escape

Summary

Nazi Germany has fallen. After Allied forces defeated Nazi Germany in World War II, Europe became a dangerous place for anyone associated with the Nazi regime, and officers, party members, and supporters of Hitler began to flee Germany.

Danielle Winter

Director

Status: Released

Release date: January 31, 2023

Language: Unknown

Spoken Languages: Unknown

Budget: -

Revenue: -
